Marketing managers have always wanted to know the return on their marketing spend and whether their budgets have been spent wisely. But online, where marketing has become far more measurable, the question has become, "What are my marketing activities really worth?"

In order to determine the ROAS (Return on Advertising Spend), the usual measurement of conversions on the basis of last-touch attribution is no longer sufficient on its own. A measurement and analysis method that provides the complete picture has established itself in the ecosystem: incrementality testing.

A mobile measurement method rooted in science

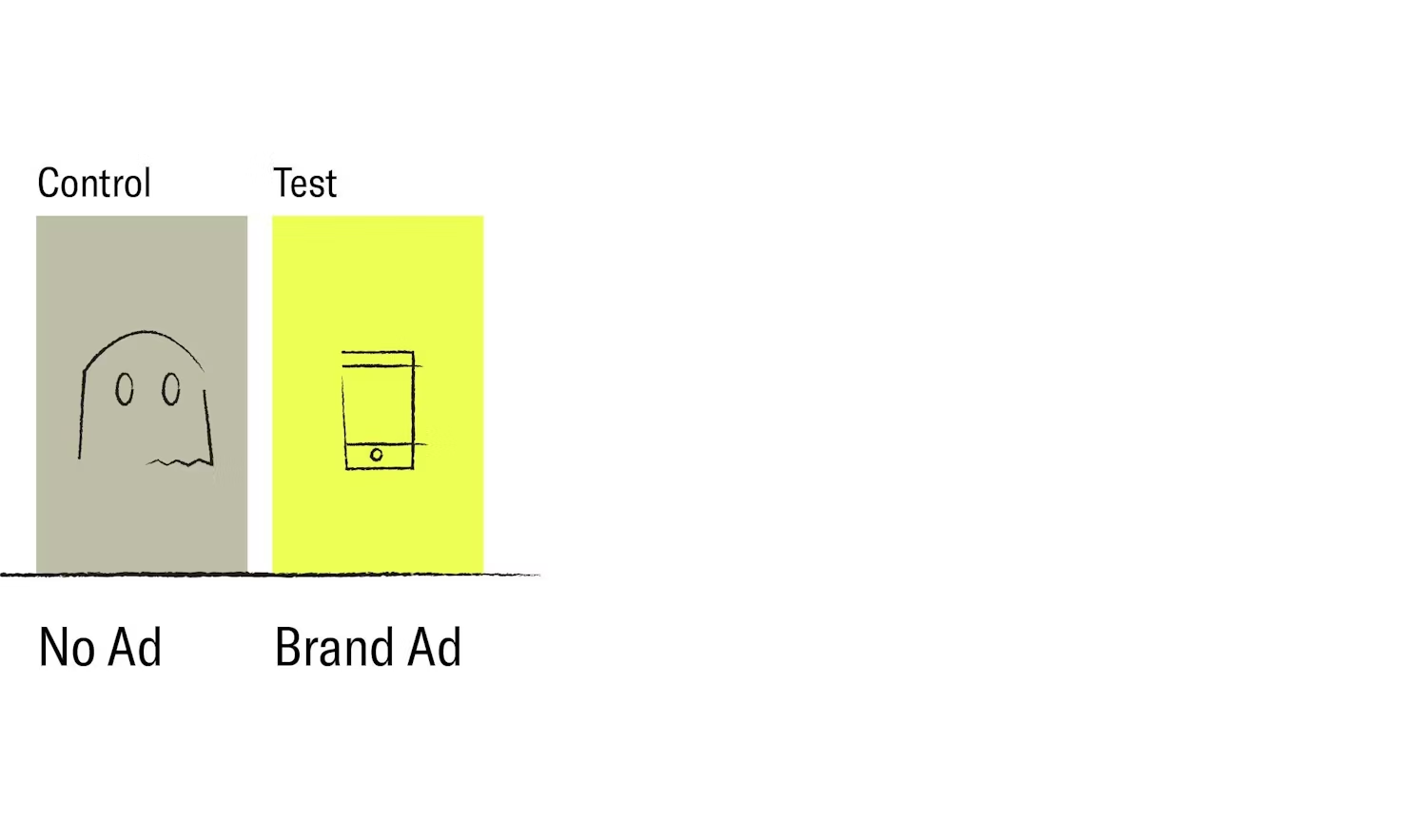

Incrementality testing borrows its method from science, where randomized controlled trials (RCTs) are used, for example, to check the effectiveness of a vaccine. In an incrementality test, users are randomly divided into a test group and a control group. The test group sees the advertising campaign and the control group does not; the performance of the two groups is then compared. The higher the conversion rate in the test group relative to that of the control group, the more effective the marketing campaign was and the greater the so-called uplift (i.e. the share of additional conversions brought in by paid advertising that would not have happened without it).
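The comparison between the two groups can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the function name `uplift_report`, its parameters, and the choice of a two-proportion z-test as the significance check are assumptions made for this example, and the numbers in the usage line are invented.

```python
from math import sqrt
from statistics import NormalDist


def uplift_report(test_users, test_conversions, control_users, control_conversions):
    """Compare the conversion rates of a test group and a control group.

    Hypothetical helper for illustration only.
    """
    cr_test = test_conversions / test_users
    cr_control = control_conversions / control_users
    if cr_control == 0:
        raise ValueError("control group has no conversions; relative uplift is undefined")

    # Absolute lift: extra conversions per user in the test group.
    absolute_lift = cr_test - cr_control
    # Relative uplift: the lift expressed as a share of the control baseline.
    relative_uplift = absolute_lift / cr_control

    # Two-proportion z-test with a pooled standard error (an assumed choice
    # of significance test; other tests are equally valid).
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z_score = absolute_lift / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z_score)))

    return {
        "cr_test": cr_test,
        "cr_control": cr_control,
        "absolute_lift": absolute_lift,
        "relative_uplift": relative_uplift,
        "p_value": p_value,
    }


# Invented example: 10,000 users per group, 300 vs. 250 conversions.
report = uplift_report(10_000, 300, 10_000, 250)
print(f"relative uplift: {report['relative_uplift']:.1%}, p-value: {report['p_value']:.3f}")
```

With these invented figures the test group converts at 3.0% and the control group at 2.5%, a relative uplift of about 20%. The p-value indicates how likely a difference of that size would be if the campaign had no effect at all.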

The concept itself is not new, but its application to advertising, especially in the mobile sector, is. When carrying out this type of experiment, the current problem in the industry is that there is no standardization or written set of rules - every advertiser must first answer a large number of questions in order to use the measurement method successfully.

Execution of the test and validation of the results

The concept of incrementality testing may sound simple, but in practice it requires an intensive study of what must be measured.

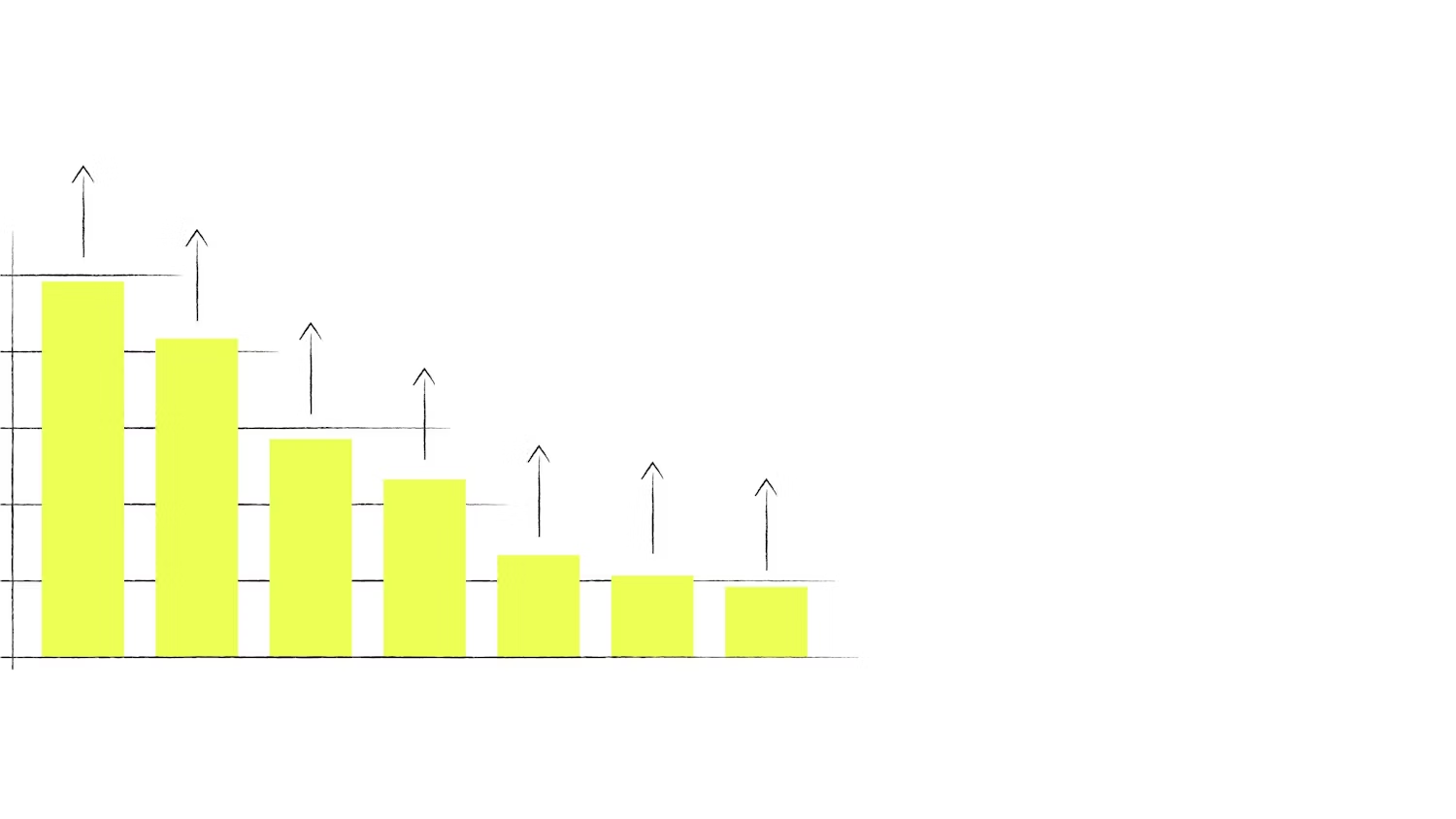

For companies running these tests for the first time, it is a matter of finding the best setup and defining the users they want to reach. Is it about people who stopped opening the app a certain time ago (about X months / X days ago) or about people who are still active? How big is the test group and how big can the control group be in relation to the total target group? How long does the test have to run in order to achieve meaningful results?
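The questions about group size and test duration can be approached with a standard power calculation for comparing two proportions. The sketch below assumes equally sized test and control groups, a two-sided test, and that the advertiser can estimate the baseline conversion rate and the smallest uplift worth detecting. The function name `users_per_group` and its default values are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def users_per_group(baseline_rate, expected_uplift, alpha=0.05, power=0.8):
    """Approximate users needed in EACH group to detect a relative uplift.

    Hypothetical helper: uses the normal-approximation formula for two
    proportions and assumes equally sized groups.
    """
    p_control = baseline_rate
    p_test = baseline_rate * (1 + expected_uplift)

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power

    variance = p_control * (1 - p_control) + p_test * (1 - p_test)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_test - p_control) ** 2)


# Invented example: 2.5% baseline conversion rate, 20% relative uplift to detect.
needed = users_per_group(baseline_rate=0.025, expected_uplift=0.20)
print(f"users needed per group: {needed}")
```

Dividing the result by the number of eligible users who enter each group per day gives a rough lower bound on how long the test has to run. Smaller expected uplifts or lower baseline rates increase the required group size sharply.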

In addition to segmentation, it's important to note that an advertiser often addresses users multiple times in their marketing mix. Therefore, it's crucial to ensure randomization takes place at the user level so the users stay in either the test group or the control group and the results are not falsified from the outset due to overlapping.
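One common way to keep each user in the same group across every touchpoint is to derive the assignment deterministically from a stable user identifier. The sketch below is an assumption about how this could be done, not a description of any particular vendor's implementation; `assign_group`, the experiment name, and the 20% control share are all hypothetical.

```python
import hashlib


def assign_group(user_id: str, experiment: str, control_share: float = 0.2) -> str:
    """Deterministically place a user in 'test' or 'control'.

    Hypothetical helper: the same user_id and experiment name always give
    the same group, so repeated contacts cannot move a user between groups.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number between 0 and 1.
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "control" if bucket < control_share else "test"


# The assignment is stable no matter how often or where it is evaluated.
print(assign_group("user-12345", "retargeting-test"))
print(assign_group("user-12345", "retargeting-test"))  # same group as above
```

Including the experiment name in the hash means a user's group in one test does not determine their group in the next, which avoids carrying the same split across experiments.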

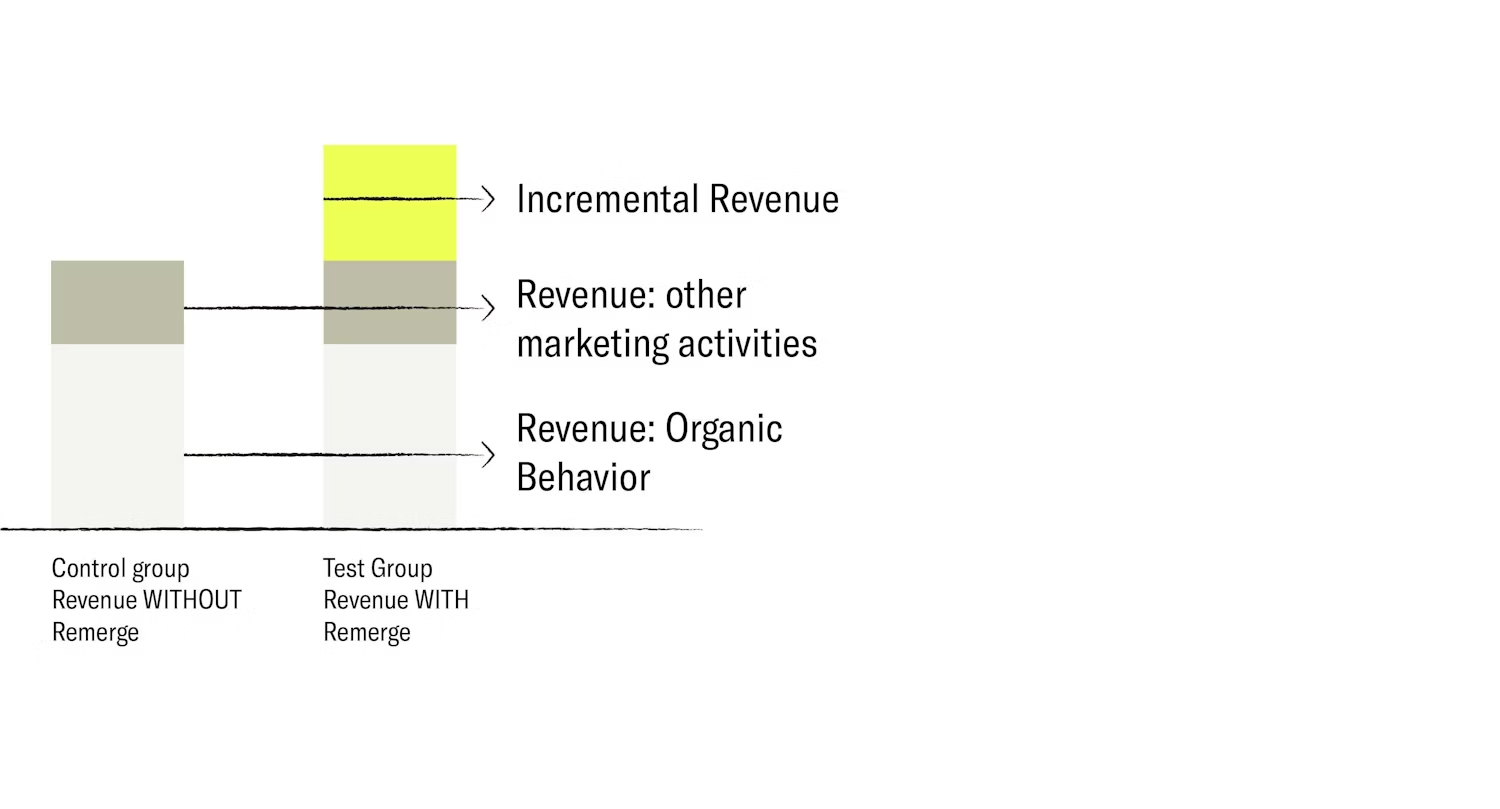

To evaluate the incremental value of a retargeting campaign, for example, the control group captures the baseline: all conversions and sales generated through organic installs and viral effects, as well as those generated through other marketing activities, including user acquisition campaigns, TV ads, and offline or out-of-home campaigns. The test group is additionally exposed to the retargeting ads, so any difference in performance between the two groups can be attributed to retargeting.

To set up an incrementality test properly, advertisers must also clearly define what they mean by a conversion. For example, visiting a homepage, adding an item to a cart, entering the checkout, and completing a purchase are all different types of conversions. While each of these is part of the sales funnel, each step is a distinct metric to consider. It is essential to decide up front which KPIs the test is meant to measure.

Why incrementality testing is a must-have

Incrementality testing gives advertisers a new, holistic perspective for assessing the true value of their app advertising campaigns, and provides answers as to which campaigns were most effective and which generated more sales. It is also a solution for cannibalization worries: in the case of retargeting, for instance, it addresses marketers' doubts about whether their campaigns could eat up their organic conversions.

But not only that: the insights gathered from incrementality tests about target groups and campaigns represent valuable information that can be used as a basis for optimizing an entire paid advertising strategy.

This has become even more relevant in the wake of Apple's privacy-centric iOS update and the introduction of its App Tracking Transparency framework. With a view to protecting personal data, advertisers no longer receive a user's device ID (IDFA) by default, thus making previous attribution models much more limited. If individual users cannot be tracked, it becomes difficult to assign conversions to a specific channel. With traditional forms of attribution losing their accuracy and granularity, advertisers will begin to place more emphasis on the real value of their campaign activities.

Incrementality measurement does not depend on device IDs: it is possible with aggregated data and econometric models. This allows advertisers to understand the relationship between ad spend and revenue and reduces the risk of ineffective budget spend for app marketing campaigns.

"Cum hoc ergo propter hoc", or in other words, correlation is not causation. Incrementality testing provides the answer to which causes which, highlighting scientifically proven causality for campaigns and giving mobile marketers a decisive advantage.