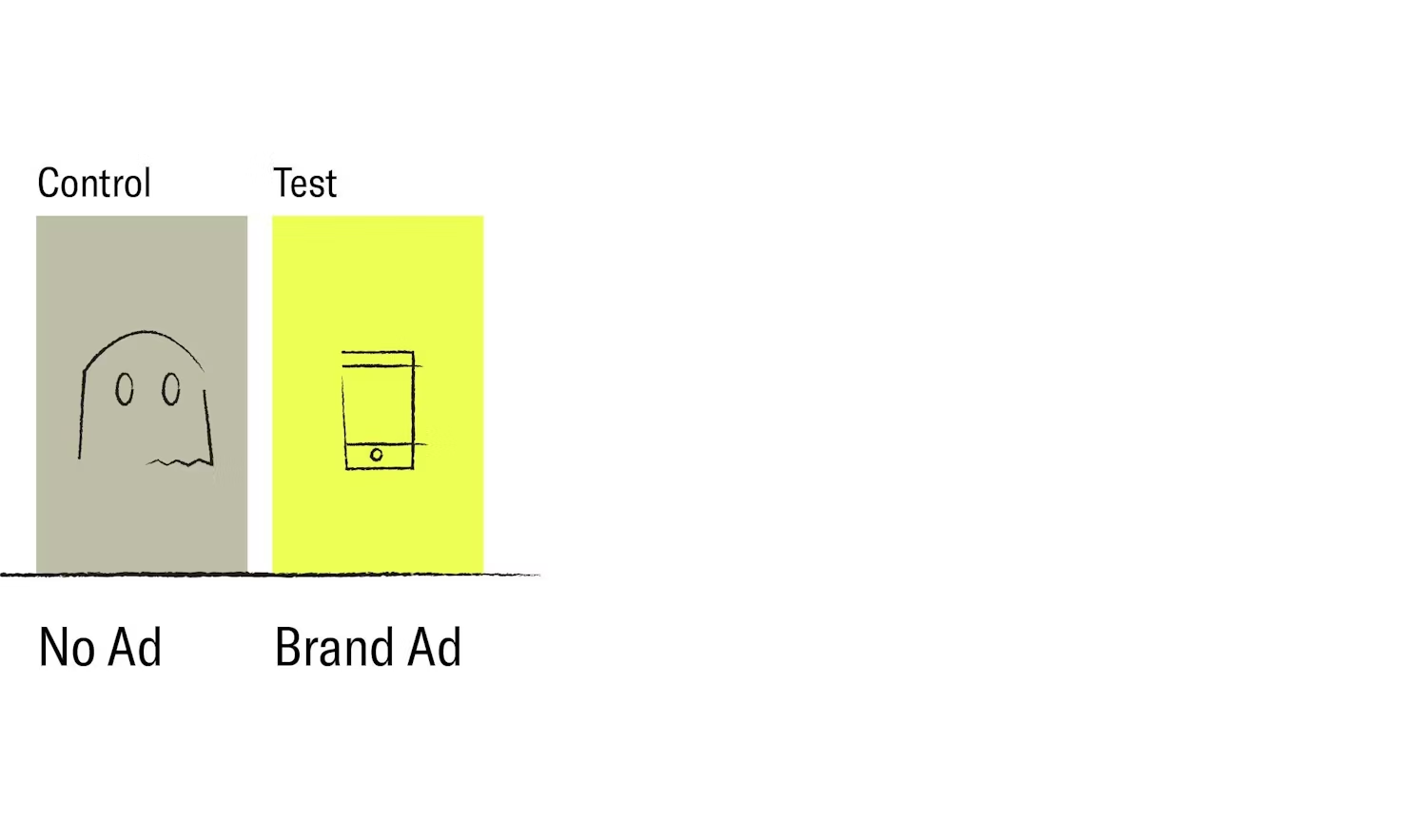

In October, The Trade Desk released a video ad that likens trick-or-treating to display media buying. In the ad, advertising giants like Facebook are portrayed as walled gardens in which children have only a limited number of houses from which they can get candy. Conversely, programmatic platforms, like The Trade Desk, are portrayed as the universe of possible homes.

The Trade Desk Video Ad

It's an interesting analogy, with some truth. While walled-garden platforms like Facebook provide scale, one could argue (and The Trade Desk does argue) that their scale is limited in scope because they are a single publisher with a few properties. One of the greatest benefits of programmatic advertising, conversely, is the access to millions of publishers, as well as the autonomy to make real buying decisions regarding where an ad is placed.

There's a problem with that argument on its own, though: in this commercial, The Trade Desk essentially depicts a world in which all programmatic DSPs are scalable. The reality, however, is that scale has been a buzzword for far too long, and the nuance matters.

Understanding scale

A simple definition of scale, in the context of programmatic advertising, would center on a DSP's ability to reach many eligible consumers. The industry, thankfully, has a standardized measurement for scale called QPS, or queries per second. Essentially, QPS tells us how many opportunities a certain bidder has to serve an ad per second. More specifically, this measurement provides a sense of the infrastructure a DSP has, as well as the diversity of supply partners it has integrated.

Let's use a tangible example to demonstrate scale. Let's say an advertiser is choosing between two DSPs to run a retargeting campaign: DSP A has a QPS of 2 million, and DSP B has a QPS of 3.3 million. Both DSPs, in this instance, are above the industry average in QPS, and I'd argue the choice is somewhat obvious. If you have a target audience of 100,000 users, DSP B should have a 50%+ higher chance of reaching those users.
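The arithmetic behind that comparison can be sketched in a few lines of Python. This is only an illustration using the two QPS figures from the example above; the function name is hypothetical, and treating a QPS advantage as a proxy for reach is a simplifying assumption, since real reach also depends on which supply those queries come from.

```python
def relative_qps_advantage(qps_a: float, qps_b: float) -> float:
    """Return how much larger qps_b is than qps_a, as a fraction of qps_a."""
    if qps_a <= 0:
        raise ValueError("qps_a must be positive")
    return (qps_b - qps_a) / qps_a


# QPS figures taken from the DSP A / DSP B example in the text.
dsp_a_qps = 2_000_000
dsp_b_qps = 3_300_000

advantage = relative_qps_advantage(dsp_a_qps, dsp_b_qps)
print(f"DSP B sees {advantage:.0%} more bid opportunities per second than DSP A")
```

With these inputs, DSP B sees 65% more bid opportunities per second than DSP A, which is consistent with the "50%+" figure above.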

Of course, this example is an oversimplification of how buyers choose their partners. It doesn't take into account customer service, experience, creative suite, etc., but there's a challenge in buying based on these non-QPS attributes. If we were to chart DSPs on a scatter plot by these attributes, we'd likely see that differences in "creative services" and "experience" between bidders all land relatively close to each other. As a result, the advertiser mentioned above will frequently choose DSP A, or even a DSP C with only 750K QPS. It's an unfortunate consequence of every platform's website saying "the most scale, with the best machine learning, and the best advertisers, and the best team." The nuance of scale is lost in this context, but perhaps it's lost because QPS (as a number) isn't enough.

How can we examine scale?

If QPS is insufficient on its own as a method for examining scale, then we need to explore the other facets of a DSP that allow it to bid at scale: specifically, supply diversity and infrastructure.

One of The Trade Desk's core arguments in this commercial was that walled gardens lack publisher diversity, while programmatic DSPs offer a wide variety of publishers. It's a reasonable argument; however, diversity of supply is not binary. It's not a case of "you have it or you don't." Rather, diversity of supply varies dramatically from one DSP to another, and those DSPs with more supply integrations provide a greater service.

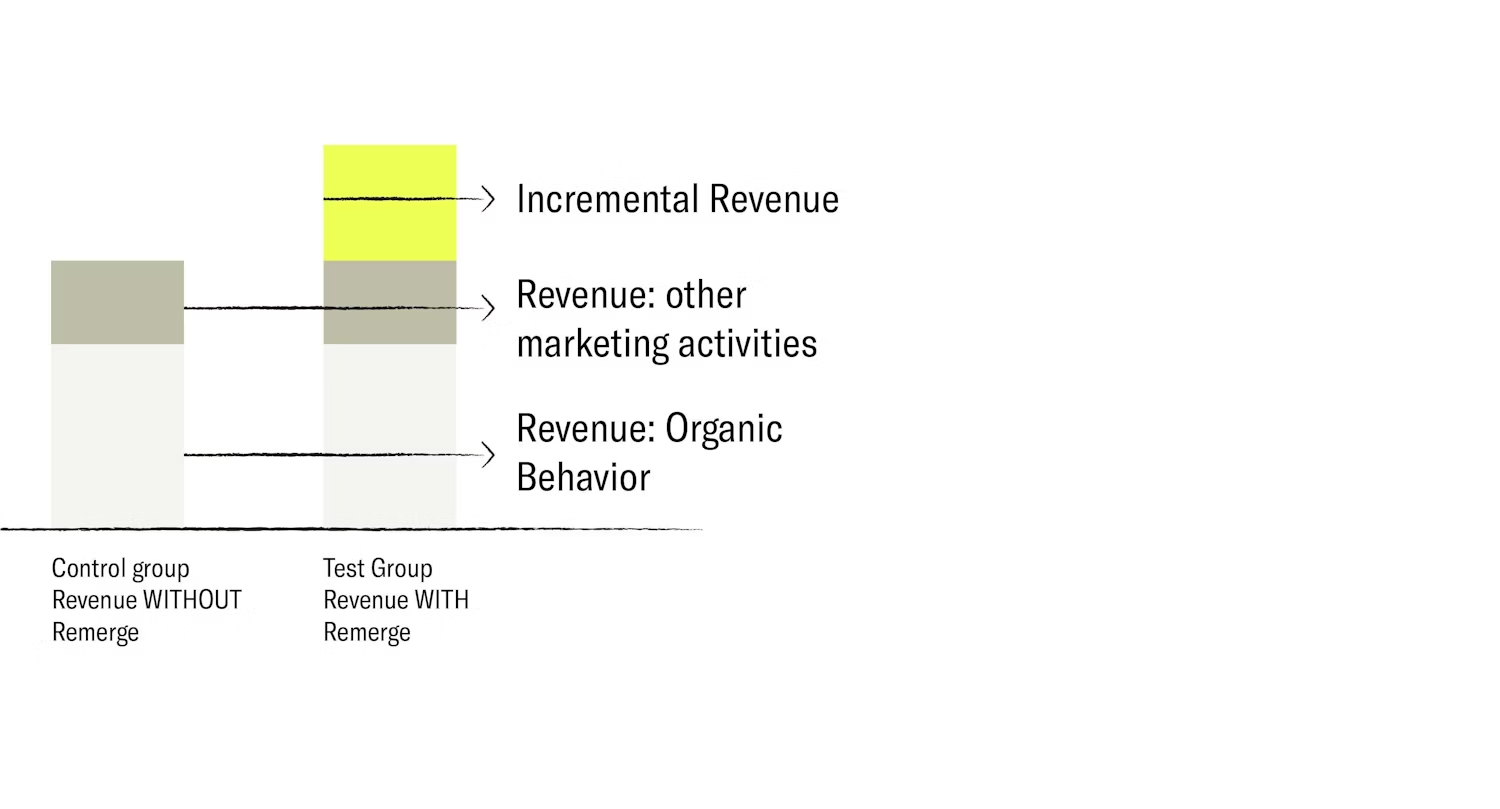

For example, let's revisit the retargeting scenario from before, but now let's say: DSP A has QPS of 2 million and access to 10 different SSPs while DSP B has QPS of 3.3 million and access to 20 different SSPs, including the same 10 as DSP A. Leaving QPS aside, if the target audience is 100K users, then DSP B is again an obvious choice.

Not only will DSP B have a greater opportunity to find more of the Advertiser's users, but it will have a significantly higher volume of data points against which it can optimize. A DSP with more supply partners can better optimize for attributes like supply partner, publisher, creative iteration, creative type, OS version, bid rates, frequency, and so on. A DSP with more supply partners can reduce diminishing returns for an advertiser by offering different formats and publishers through which they can approach an audience.

Another important optimization process relates to traffic pricing. With higher scale, a DSP can observe more auction outcomes and learn the market prices for different traffic components more efficiently. Ostensibly, it can then buy the same amount of traffic at a lower price and yield better performance results for the advertiser. This capability has become far more important since most programmatic supply has transitioned to first-price auctions this year. In a second-price auction, if you bid too high, you simply pay the price of the second-highest bid, while in a first-price auction you can end up overpaying for the same impression and wasting an advertiser's money.
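To make the difference concrete, here is a minimal sketch of how the winner's price is set under each auction type. The bid values and function name are hypothetical, and the model is deliberately simplified: real exchanges typically also apply price floors, and second-price auctions often charge a small increment above the second-highest bid.

```python
from typing import Sequence


def clearing_price(bids: Sequence[float], auction_type: str) -> float:
    """Return the price the highest bidder pays in a simplified auction.

    "first"  -> the winner pays its own bid.
    "second" -> the winner pays the second-highest bid.
    """
    if len(bids) < 2:
        raise ValueError("need at least two bids to compare auction types")
    ranked = sorted(bids, reverse=True)
    if auction_type == "first":
        return ranked[0]
    if auction_type == "second":
        return ranked[1]
    raise ValueError(f"unknown auction type: {auction_type!r}")


# Hypothetical CPM bids (in dollars) for one impression; the top bid is too high.
bids = [8.00, 3.00, 2.50]

first_price = clearing_price(bids, "first")
second_price = clearing_price(bids, "second")
overpayment = first_price - second_price

print(f"First-price auction:  winner pays ${first_price:.2f}")
print(f"Second-price auction: winner pays ${second_price:.2f}")
print(f"Cost of overbidding in a first-price auction: ${overpayment:.2f}")
```

In this sketch the same overly aggressive bid costs nothing extra in the second-price case, but in the first-price case the full gap comes out of the advertiser's budget. That gap is what a DSP with more auction observations is better placed to shrink.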

Therefore, if we make the argument that our goal is to "find the right user, in the right place, with the right ad, at the right price," then variation in supply partners must matter. And while high diversity of supply is a straightforward concept, achieving variety at scale requires robust infrastructure, which in turn requires money.

A high percentage of DSPs today leverage Amazon Web Services (AWS) to power their infrastructure and bidding. The challenge, however, is that the cost of scaling up capacity on cloud infrastructure like AWS is extremely high, so if a DSP wants to increase its supply diversity, or its bid request volume from a certain SSP, it needs to pay significantly more for AWS. To fund these necessary investments, these DSPs will need either more advertisers or higher margins on their existing advertisers to make up the cost.

Alternatively, some DSPs invest in building their own infrastructure, and manage their own custom hardware and network, optimized for their needs. As a result, their costs are dramatically lower compared to those using AWS, and they can extend those cost savings to their clients in the form of lower traffic costs and subsequently lower cost per conversion. These DSPs can easily toggle new supply partners, increase bid rates on certain SSPs, and provide a service to their advertisers that has greater upside for scale at lower cost.

Ultimately, robust infrastructure will yield higher bid rates and a wider variety of supply access, which in turn will manifest as high QPS. That being said, because QPS is not an important enough buying factor today, advertisers should investigate these attributes more thoroughly.

Why is scale particularly important in the future?

With any content written today, there's an inherent caveat of "will this matter in the future?" Imagine a future in which we can no longer target device IDs: a future where look-alike models, device graphs, exclusion targeting and retargeting are not possible for the majority of app users. (Of course, we don't know what the future will look like, but let's just assume most consumers won't opt in to ad tracking.) In this version of the future, scale will be the most important attribute available to advertisers.

In today's programmatic world, we bid aggressively on specific IDFAs based on the data sets we have at our disposal. We have highly dynamic bidders that enable us to reach specific consumers with specific ads, and through conversion rate prediction and optimization, we can apply appropriate CPM bids to win the opportunity to serve an ad. If, however, we remove the IDFA, that ability to apply intelligent bidding at the user level vanishes, and we're left with a landscape that has much less certainty.

If an advertiser has little certainty about the expected outcome from serving an ad, then that advertiser cannot afford to pay a high price for that ad. However, that same advertiser needs to reach prospective and existing users to grow and sustain their business: they need to drive outcomes similar to what they see today, but at a lower cost. So, what we will eventually arrive at is a landscape in which broad, high-scale, low-cost bidding will provide the highest level of upside to advertisers.

Imagine this scenario

And since we started this piece with an analogy, let's end with one as well to illustrate scale in a world with low access to the IDFA: Imagine you wanted to buy an affordable house today. You'd likely start the process on as many platforms as possible, like Zillow, Redfin, Realtor, etc., and, through the information provided on these platforms, you would physically attend open houses for properties that matched your specific criteria. If you prospect for homes in this manner long enough, eventually, you'll get lucky and find an affordable property.

Now imagine these platforms didn't exist. How would you buy a house?

You'd likely need to drive around your desired location with an eye out for "for sale" signs until you found an open house. However, because you'd be operating on a low budget, you're going to need to find a high volume of open houses until you arrive at one suited for your needs, and one where you aren't outbid.

It's a laborious process, but you'll have a much easier time finding the right house than a careless home buyer who's walking around neighborhoods.

In this scenario, you are certainly both home-buyers, but your ability to buy the right home at the right price is not the same.